A month ago, Stephen Wolfram put out a little booklet (140 pages), What Is ChatGPT Doing … and Why Does It Work?

It gives a gentle introduction to large language models and the architecture and training of neural networks.

The entire book is freely available:

- What Is ChatGPT Doing … and Why Does It Work?

- Wolfram|Alpha as the Way to Bring Computational Knowledge Superpowers to ChatGPT

The advantage of these online texts is that you can click on any of the images, copy their content into a Mathematica notebook, and play with the code.

This really gives a good idea of how an extremely simplified version of ChatGPT (based on GPT-2) works.

Downloading the model (within Mathematica) takes about 500 MB, but afterwards you can complete any prompt quickly, and see how the results change if you turn up the ‘temperature’.

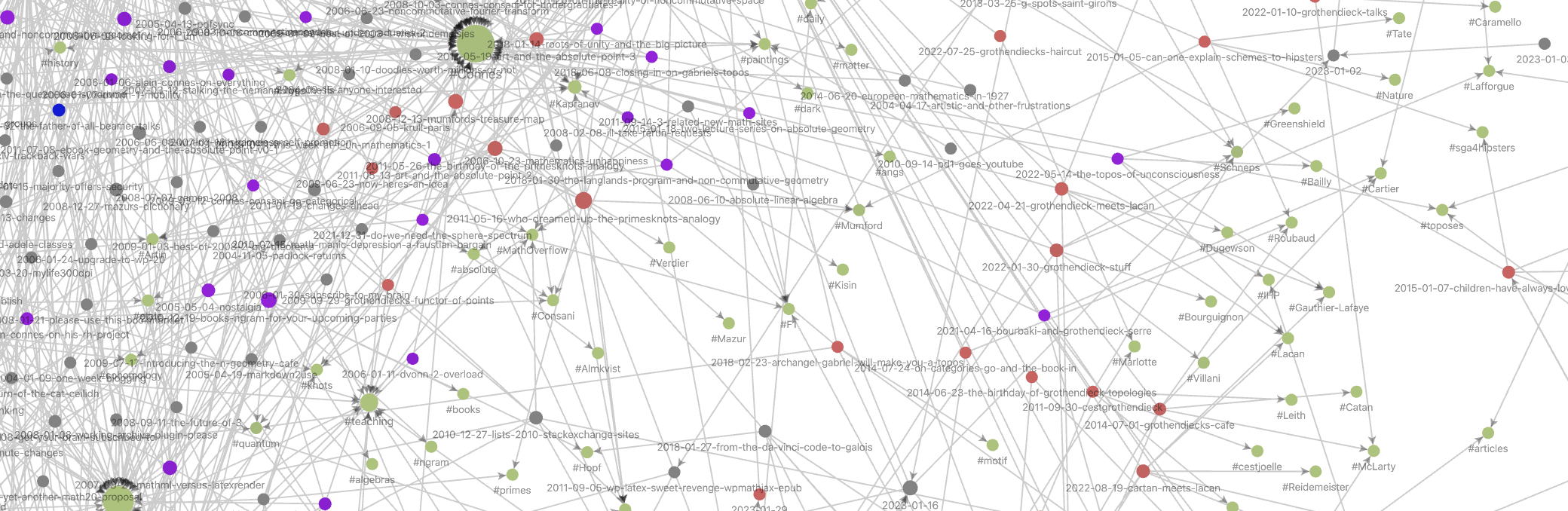
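To get a feel for what turning up the ‘temperature’ does, here is a minimal sketch in Python (not the Wolfram Language code from the book). It assumes the usual definition, in which the model's raw scores for the candidate next tokens are divided by the temperature before being turned into probabilities. The tokens and scores below are made up for illustration, and the function names are hypothetical, not part of any library.

```python
import math
import random


def softmax_with_temperature(logits, temperature):
    """Convert raw scores into probabilities, rescaled by the temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    largest = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(s - largest) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_token(tokens, logits, temperature, rng=random):
    """Draw one token at random, weighted by the temperature-scaled probabilities."""
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(tokens, weights=probs, k=1)[0]


# Made-up candidate tokens and scores, purely for illustration.
tokens = ["geometry", "algebra", "violence"]
logits = [2.0, 1.0, 0.1]

for temperature in (0.2, 0.8, 2.0):
    probs = softmax_with_temperature(logits, temperature)
    print(temperature, [round(p, 3) for p in probs])
```

At low temperature almost all the probability goes to the top-scoring token, so completions become nearly deterministic. At high temperature the distribution flattens and unlikely tokens get picked more often.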

You shouldn’t expect too much from this model. Here’s what it came up with from the prompt “The major results obtained by non-commutative geometry include …” after 20 steps, at temperature 0.8:

NestList[StringJoin[#, model[#, {"RandomSample", "Temperature" -> 0.8}]] &,
  "The major results obtained by non-commutative geometry include ", 20]

The major results obtained by non-commutative geometry include vernacular accuracy of math and arithmetic, a stable balance between simplicity and complexity and a relatively low level of violence.

Lol.

In the more philosophical sections of the book, Wolfram speculates about the secret rules of language that ChatGPT must have found if we want to explain its apparent success. One of these rules, he argues, must be the ‘logic’ of languages:

> But is there a general way to tell if a sentence is meaningful? There’s no traditional overall theory for that. But it’s something that one can think of ChatGPT as having implicitly “developed a theory for” after being trained with billions of (presumably meaningful) sentences from the web, etc.
>
> What might this theory be like? Well, there’s one tiny corner that’s basically been known for two millennia, and that’s logic. And certainly in the syllogistic form in which Aristotle discovered it, logic is basically a way of saying that sentences that follow certain patterns are reasonable, while others are not.

Something else ChatGPT may have discovered is language’s ‘semantic laws of motion’, which would let it complete sentences by following ‘geodesics’:

> And, yes, this seems like a mess—and doesn’t do anything to particularly encourage the idea that one can expect to identify “mathematical-physics-like” “semantic laws of motion” by empirically studying “what ChatGPT is doing inside”. But perhaps we’re just looking at the “wrong variables” (or wrong coordinate system) and if only we looked at the right one, we’d immediately see that ChatGPT is doing something “mathematical-physics-simple” like following geodesics. But as of now, we’re not ready to “empirically decode” from its “internal behavior” what ChatGPT has “discovered” about how human language is “put together”.

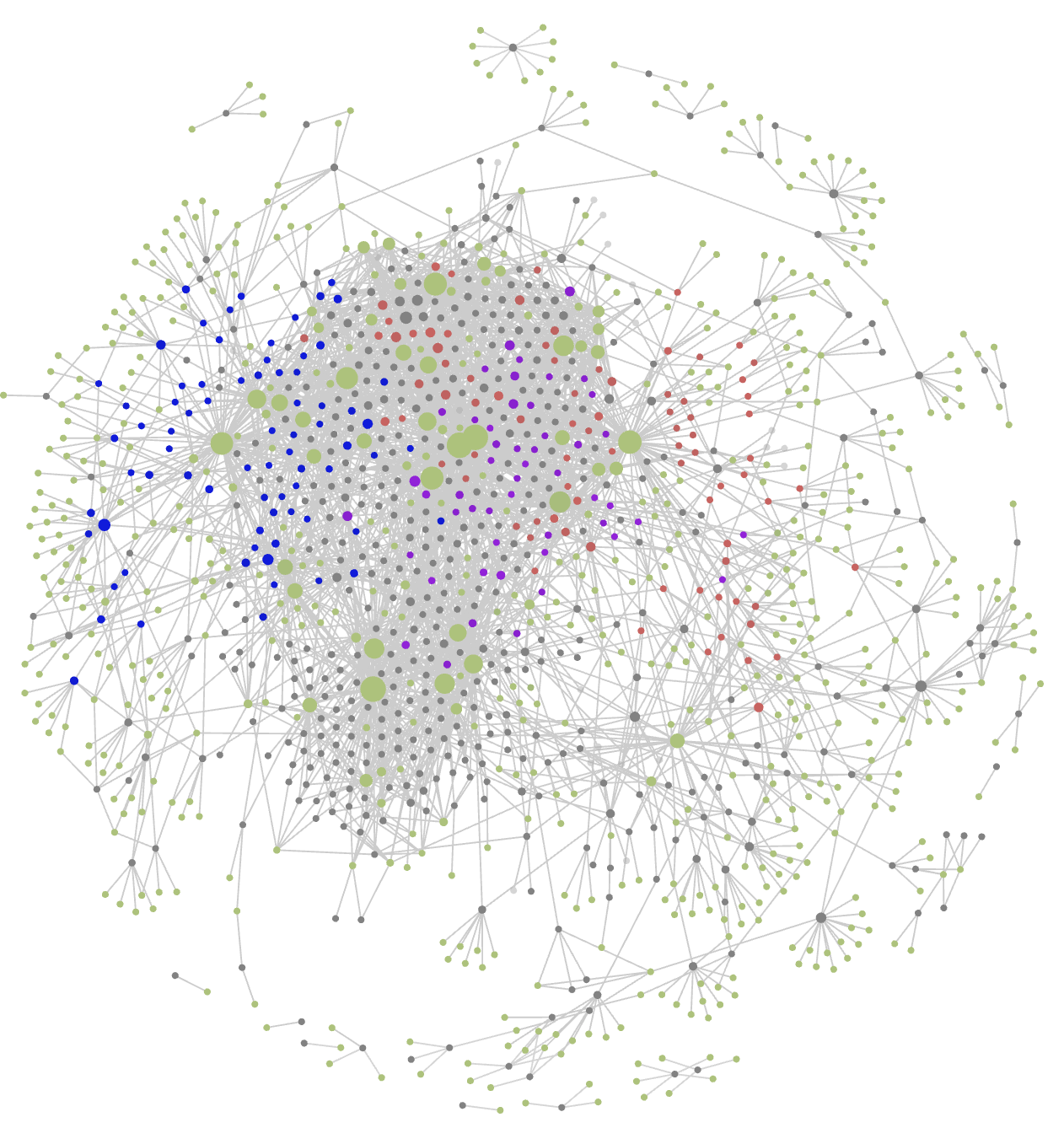

So, the ‘hidden secret’ of successful large language models may very well be a combination of logic and geometry. Does this sound familiar?

If you prefer watching YouTube over reading a book, or if you want to see the examples in action, here’s a video by Stephen Wolfram. The stream starts about 10 minutes into the clip, and the whole lecture is pretty long, well over 3 hours (about as long as it takes to read What Is ChatGPT Doing … and Why Does It Work?).
