Here's a nice interview with Jacques Roubaud (the guy responsible for Bourbaki's death announcement), held in the courtyard of the ENS. He talks about go, category theory, the composition of his book $\in$ and, of course, Grothendieck and Bourbaki.

Clearly there are pop-math books dedicated to $\pi$ or $e$, but I know of only one book whose title is a single mathematical symbol: $\in$ by Jacques Roubaud, which appeared in 1967.

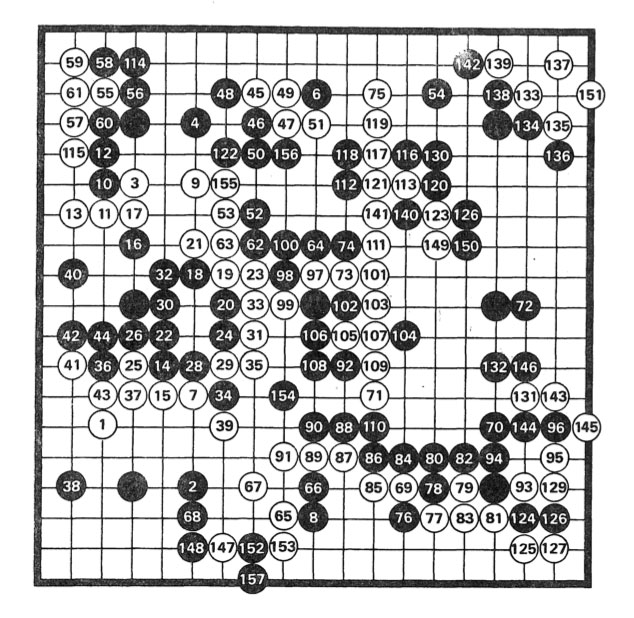

The book consists of 361 short texts, 180 for the white stones and 181 for the black stones of a game of go between Masami Shinohara (8th dan) and Mitsuo Takei (2nd kyu). Here's the game:

In the interview, Roubaud says that go became quite popular among French mathematicians in the mid-sixties, or at least among those in the circle of Chevalley, who had discovered the game in Japan and became a go evangelist on his return to Paris.

In the preface to $\in$, the reader is invited to read the book in several ways. One can follow certain groupings of stones on the board, whose corresponding texts share a common theme. Or one can read the texts in the order in which the go game unfolded (the numbering of the white and black stones differs from the order of the texts in the book; fortunately, there's a conversion table on pages 153-155).

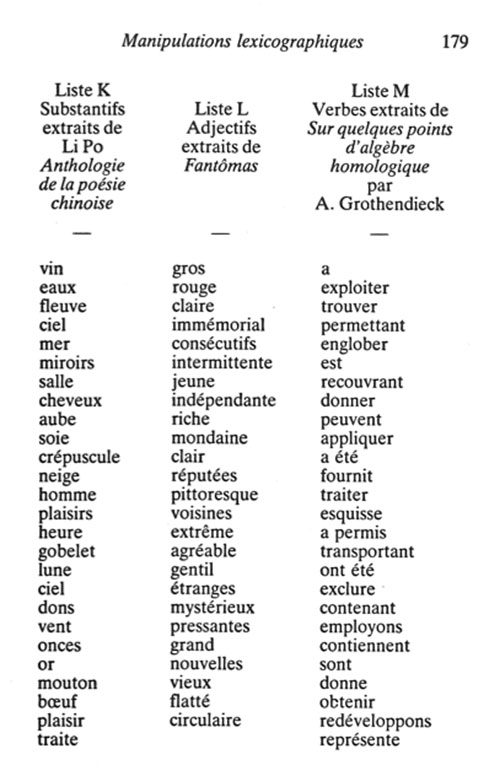

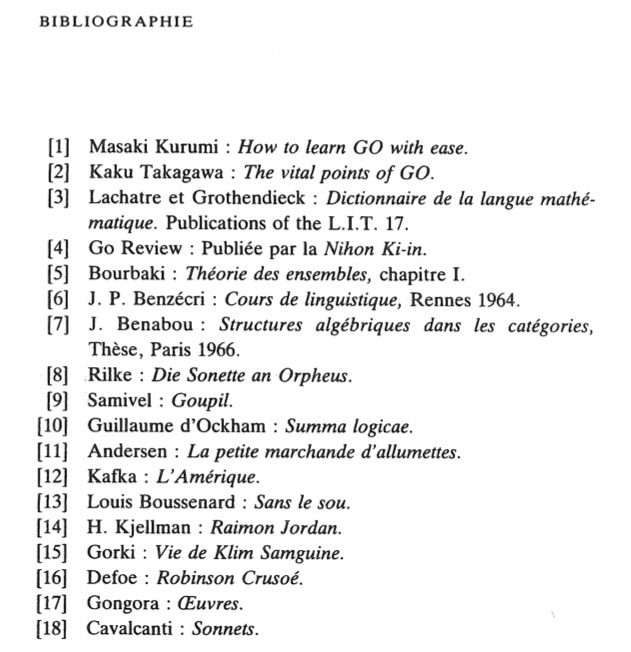

Or you can read them by paragraph, each paragraph having a mathematical symbol as its title. We have $\in$, $\supset$, $\Box$, Hilbert's $\tau$ and an invented symbol, the 'Symbole de la réflexion' (symbol of reflection), consisting of two mirrored, overlapping $\in$'s. For more information, the reader should consult the "Dictionnaire de la langue mathématique" by Lachatre and … Grothendieck.

According to the 'bibliographie' below, it is number 17 in the 'Publications of the L.I.T'.

Other 'odd' books in the list include Bourbaki's book on set theory; the thesis of Jean Benabou (who was responsible for Roubaud's conversion from solving the exercises in Bourbaki to doing research in category theory; in the interview, Roubaud also claims that category theory inspired the composition of $\in$); and Guillaume d'Ockham's 'Summa logicae'…
